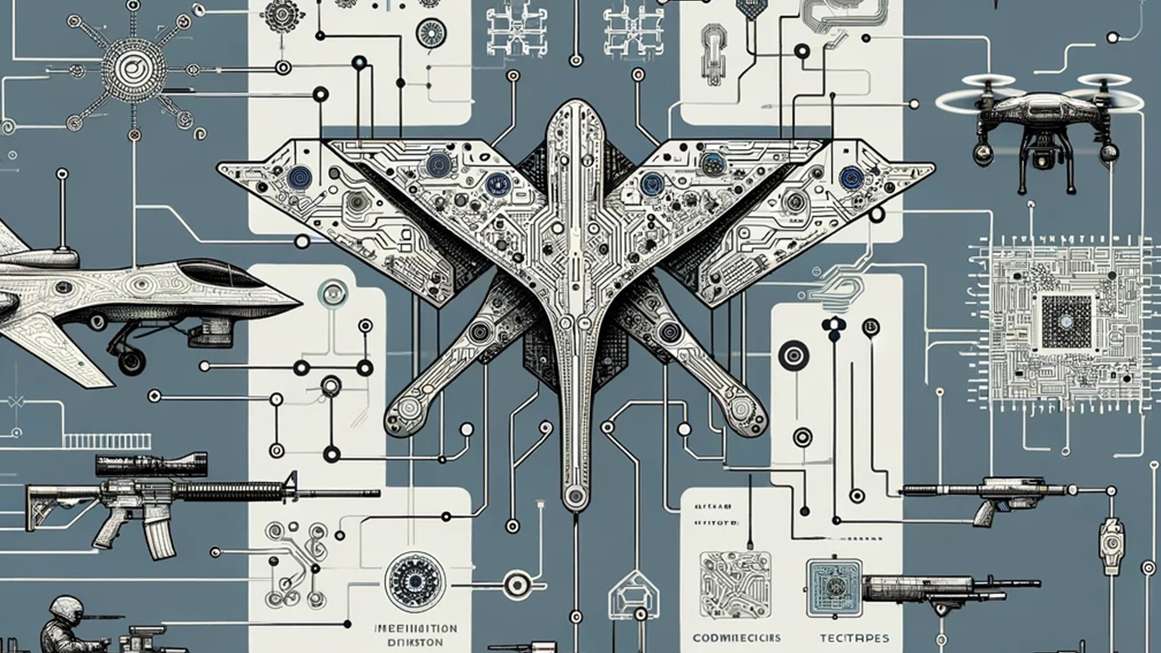

Everyone knows what the AI apocalypse is supposed to look like. In the movies WarGames and The Terminator, a superintelligent computer takes control of weapons in order to destroy humanity. Fortunately, that scenario is unlikely for now. America's nuclear missiles, which run on decades-old technology, require a human with a physical key to launch.

But AI is already killing people around the world in more boring ways. The U.S. and Israeli militaries have been using AI systems to sift through intelligence and plan airstrikes, according to Bloomberg News, The Guardian, and +972 Magazine.

This type of software allows commanders to find and list targets much faster than human staff could on their own. The attacks are then carried out by human pilots, flying either manned aircraft or remotely controlled drones. “It made the job easier because the machines did it cold,” one Israeli intelligence officer told The Guardian.

Furthermore, Turkish, Russian and Ukrainian arms manufacturers claim to have created “autonomous” drones that can attack targets even if the connection to the remote pilot is lost or disrupted. However, experts are skeptical about whether these drones actually kill autonomously.

In war, as in peace, AI is a tool that helps humans do what they already want to do more efficiently. Human leaders will make decisions about war and peace as they always have. For the near future, most weapons will require a flesh-and-blood fighter to pull the trigger or push the button. AI allows those in the middle (the staff officers and intelligence analysts in windowless rooms) to mark enemies for death with less effort, less time, and less thought.

“The Terminator image of killer robots obscures how data-driven warfare and other areas of data-driven policing, profiling, border control, etc. already pose a serious threat,” says Lucy Suchman, a professor emerita of the anthropology of science and technology. She is a member of the International Committee for Robot Arms Control.

Suchman argues that it is most helpful to understand AI as a “stationary machine” running on top of old surveillance networks. “The availability of large amounts of data and computing power allows these machines to learn how to select people and the types of patterns that governments care about,” she says. Think Minority Report rather than The Terminator.

Even if humans review AI decisions, the speed of automated targeting “leaves less and less room for judgment,” Suchman says. “It’s a really bad idea to try to automate areas of human practice that are fraught with all kinds of problems.”

AI can also be used to pursue targets that humans have already chosen. For example, Turkey's Kargu-2 attack drones can track a target even after the drone loses its connection to its operator, according to a 2021 U.N. report on fighting in Libya involving the Kargu-2.

The usefulness of “autonomous” weapons “really depends on the situation,” says Zachary Kallenborn, a policy fellow at George Mason University who specializes in drone warfare. For example, a ship's missile defense system may need to shoot down dozens of incoming rockets with little risk of hitting anything else. AI-controlled guns could be useful in such situations, but Kallenborn argues that “firing autonomous weapons at humans in an urban environment is a terrible idea” because it is difficult to distinguish between friendly troops, enemy fighters, and bystanders.

The scenario that really keeps Kallenborn up at night is the “drone swarm,” a network of autonomous weapons giving instructions to one another, because a single error could spread across dozens or even hundreds of killing machines.

Several human rights groups, including Suchman's committee, are pushing for a treaty to ban or regulate autonomous weapons. So is the Chinese government. Washington and Moscow have been reluctant to submit to international controls, but both have imposed internal limits on AI weapons.

The U.S. Department of Defense has issued regulations requiring human oversight of autonomous weapons. Russia appears to have turned off the AI capabilities of its Lancet-2 drones, according to an analysis cited by the online military magazine Breaking Defense.

The same impulse that drove the development of AI warfare appears to be driving its limits as well: human leaders' thirst for control.

“Military commanders want to manage very carefully how much violence they inflict, because ultimately they only do so to support larger political goals,” Kallenborn says.