You've probably heard that a picture is worth a thousand words. But can a large language model (LLM) get the picture if it has never seen an image before?

As it turns out, language models trained purely on text have a solid understanding of the visual world. They can write image-rendering code to generate complex scenes with intriguing objects and compositions, and even when that knowledge is not used properly, LLMs can refine their images. Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) observed this when they prompted language models to self-correct their code for different images; the systems improved their simple clip-art drawings with each query.

The visual knowledge of these language models comes from how concepts such as shapes and colors are described across the internet, whether in language or in code. Given an instruction such as “draw a parrot in the jungle,” the user prompts the LLM to consider what it has previously read in such descriptions. To assess how much visual knowledge LLMs have, the CSAIL team put together a “visual check” for LLMs: using a “visual aptitude dataset,” they tested the models’ abilities to draw, recognize, and self-correct these concepts. The researchers then collected the final draft of each illustration and trained a computer vision system to identify the content of real photos.

“We essentially train the visual system without using visual data directly,” said Tamar Rott Shaham, co-senior author of the study and an MIT electrical engineering and computer science (EECS) postdoc at CSAIL. “Our team queried language models to write image-rendering code that generates data for us, and then trained the visual system to evaluate natural images. We were inspired by the question of how visual concepts are represented through other mediums, such as text. To express their visual knowledge, LLMs can use code as common ground between text and vision.”

To build this dataset, the researchers first queried the models to generate code for a variety of shapes, objects, and scenes. They then compiled that code to render simple digital illustrations, such as a row of bicycles, showing that LLMs understand spatial relationships well enough to draw the two-wheelers in a horizontal row. In another example, a model combined two random concepts to generate a car-shaped cake. The language models also demonstrated the ability to create visual effects, producing a glowing light bulb.

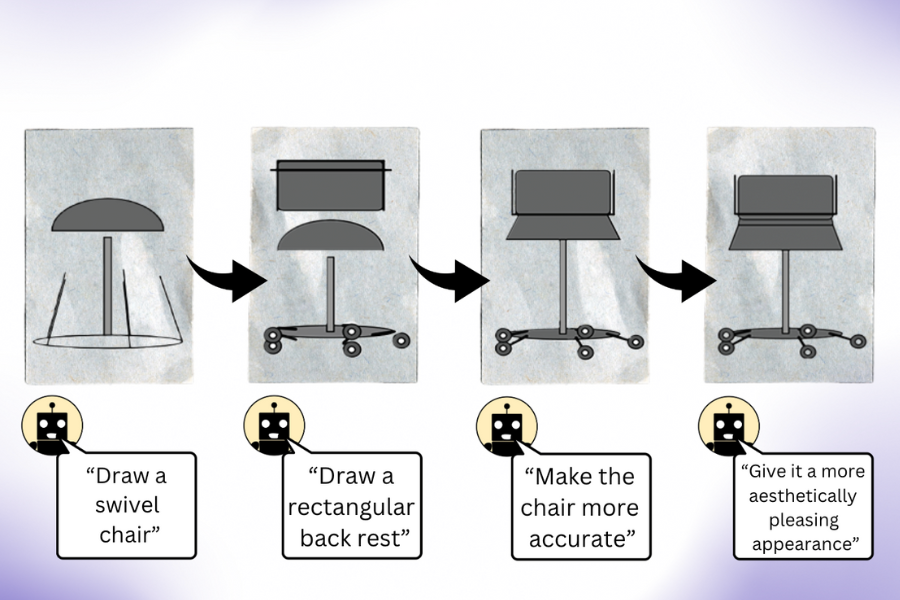
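To make the rendering step concrete, here is a minimal sketch of the kind of drawing program involved. It is hand-written for illustration, not output from any model in the study, and the function names, the SVG output format, and the layout constants are all assumptions.

```python
# Illustrative stand-in for LLM-written rendering code (not taken from the study).
# It draws a row of very simple bicycles as an SVG string.

def bicycle_svg(x, y, wheel_radius=20):
    """Return SVG elements for one bicycle whose rear wheel is centred at (x, y)."""
    front_x = x + 3 * wheel_radius            # front wheel centre
    seat_x = x + wheel_radius                 # top of the frame
    seat_y = y - wheel_radius
    return "\n".join([
        f'<circle cx="{x}" cy="{y}" r="{wheel_radius}" fill="none" stroke="black"/>',
        f'<circle cx="{front_x}" cy="{y}" r="{wheel_radius}" fill="none" stroke="black"/>',
        f'<polyline points="{x},{y} {seat_x},{seat_y} {front_x},{y}" fill="none" stroke="black"/>',
    ])

def row_of_bicycles(count, spacing=100, wheel_radius=20, margin=10):
    """Place `count` bicycles on one horizontal line, `spacing` pixels apart."""
    height = 4 * wheel_radius
    y = height - wheel_radius - 5             # every wheel shares this baseline
    # Each bicycle spans from (x - r) to (x + 4r); size the canvas to fit the last one.
    width = 2 * margin + 5 * wheel_radius + (count - 1) * spacing
    bikes = [
        bicycle_svg(margin + wheel_radius + i * spacing, y, wheel_radius)
        for i in range(count)
    ]
    body = "\n".join(bikes)
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}">\n'
        f"{body}\n</svg>"
    )

if __name__ == "__main__":
    print(row_of_bicycles(3))
```

Saving the printed text to a `.svg` file and opening it in a browser shows three bicycles side by side. The spatial relationship ("in a horizontal row") lives entirely in the coordinates: all wheels share one `y` value, and only `x` changes from one bicycle to the next.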

“Our research shows that when you query an LLM (without multimodal pre-training) to create an image, it knows much more than meets the eye,” says co-author Pratyusha Sharma, an EECS PhD student and CSAIL member. “Let's say you ask it to draw a chair. The model knows other things about this piece of furniture that it might not have rendered right away, so users can query the model to improve the visual it produces with each iteration. Surprisingly, the model can iteratively enrich the drawing by significantly improving the rendering code.”
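The feedback loop Sharma describes can be sketched in a few lines. This is only an outline under stated assumptions: `query_llm` is a hypothetical callable standing in for whatever model interface is used, the prompt wording is invented, and `fake_llm` is a toy stub included so the example runs without a real model.

```python
from typing import Callable, List

def refine_drawing(query_llm: Callable[[str], str], concept: str, rounds: int = 3) -> List[str]:
    """Ask for rendering code once, then repeatedly ask the model to improve it.

    `query_llm` is a hypothetical text-in, text-out function supplied by the caller.
    Returns every version of the code, from first draft to final draft.
    """
    code = query_llm(f"Write code that draws: {concept}")
    history = [code]
    for _ in range(rounds):
        prompt = (
            f"Here is code that draws {concept}:\n"
            f"{code}\n"
            "Improve the drawing and return the full revised code."
        )
        code = query_llm(prompt)
        history.append(code)
    return history

def fake_llm(prompt: str) -> str:
    """Toy stand-in for a model: adds one more detail each time it is asked to improve."""
    if "Improve the drawing" in prompt:
        # The code sits between the first line (header) and the last line (instruction).
        lines = prompt.split("\n")[1:-1]
        return "\n".join(lines + [f"add_detail({len(lines)})"])
    return "draw_outline()"

if __name__ == "__main__":
    drafts = refine_drawing(fake_llm, "a chair", rounds=2)
    print(drafts[-1])
```

In this sketch the model only ever sees its own previous code, never a rendered image, which mirrors the text-only setting the article describes.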

The researchers gathered these illustrations and used them to train a computer vision system that can recognize objects in real-world photographs, despite never having seen one before. With this synthetic, text-generated data as its only reference point, the system outperformed other procedurally generated image datasets that were trained with real-world photographs.

The CSAIL team believes that combining the hidden visual knowledge of LLMs with the artistic capabilities of other AI tools, such as diffusion models, could also be beneficial. Systems like Midjourney sometimes lack the know-how to consistently adjust the fine details of an image, which makes it difficult for them to handle requests such as reducing the number of cars in a picture or placing one object behind another. If an LLM sketched out the requested change for the diffusion model in advance, the resulting edit could be more satisfying.

The irony, as Rott Shaham and Sharma acknowledge, is that LLMs sometimes fail to recognize the same concepts that they can draw. This became apparent when the models misidentified human re-creations of images in the dataset. Such diverse representations of the visual world likely triggered the language models’ misconceptions.

While the models had difficulty recognizing these abstract depictions, they showed creativity in drawing the same concept differently each time. When the researchers asked LLMs to draw concepts such as strawberries and arcades multiple times, the models produced drawings from different angles, with different shapes and colors. This hints that the models may have actual mental imagery of visual concepts, rather than simply reciting examples they have seen before.

The CSAIL team believes that this procedure can serve as a baseline for evaluating how well generative AI models can train computer vision systems. The researchers also hope to expand the set of tasks on which they challenge language models. As for their recent study, the MIT group notes that because they do not have access to the training sets of the LLMs they used, it is difficult to investigate the origin of the models’ visual knowledge further. In the future, they plan to explore training an even better vision model by letting the LLM work with it directly.

Sharma and Rott Shaham are joined on the paper by former CSAIL affiliate Stephanie Fu '22, MNG '23, and EECS PhD students Manel Baradad, Adrián Rodríguez-Muñoz '22, and Shivam Duggal, all of whom are CSAIL affiliates, as well as MIT Associate Professor Phillip Isola and Professor Antonio Torralba. Their work was supported in part by grants from the MIT-IBM Watson AI Lab, the LaCaixa Fellowship, the Zuckerman STEM Leadership Program, and the Viterbi Fellowship. They are presenting their paper this week at the IEEE/CVF Computer Vision and Pattern Recognition Conference.