Autonomous driving has long relied on machine learning to plan routes and detect objects, but some companies and researchers now believe that generative AI, meaning models that take in data about their surroundings and generate predictions, could help take autonomy to the next level. Waabi competitor Wayve launched a similar model last year, trained on the video its vehicles collect.

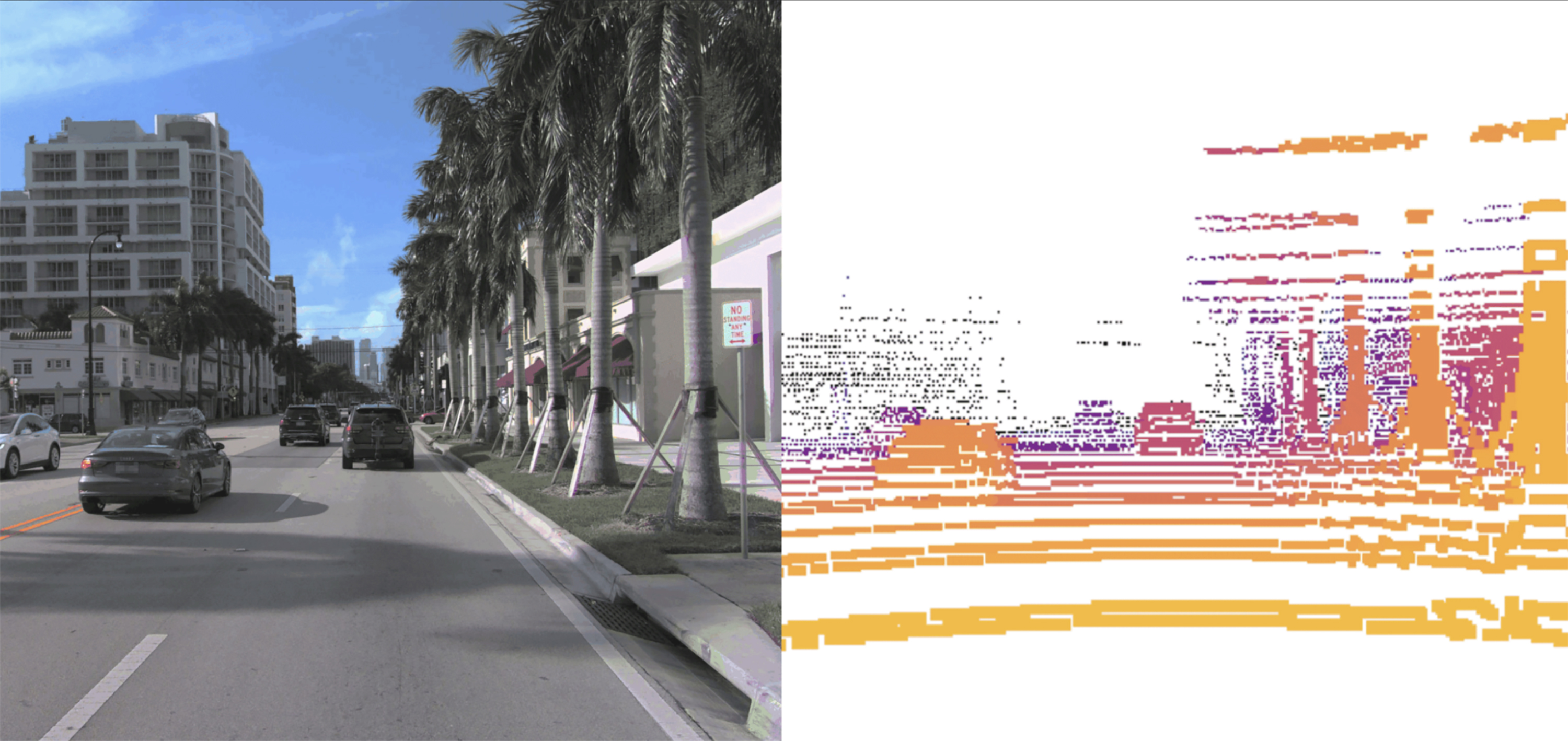

Waabi's model, Copilot4D, works in a similar way to image or video generators like OpenAI's DALL-E and Sora. It takes a point cloud of LiDAR data, which visualizes a 3D map of the car's surroundings, and breaks it into chunks, similar to how an image generator breaks a photo into pixels. Based on its training data, Copilot4D then predicts how every point in the LiDAR data will move. Doing this continuously lets it generate predictions 5 to 10 seconds into the future.
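
To make the idea in the paragraph above concrete, here is a minimal sketch of the two steps it describes: breaking a point cloud into chunks, then repeatedly predicting the next frame and feeding it back in. This is not Waabi's code. The function names, the 0.5 m voxel size, the 10 Hz frame rate, and above all the `predict_next` placeholder (a simple constant-velocity shift standing in for a learned generative model) are assumptions made purely for illustration.

```python
import math


def voxelize(points, voxel_size=0.5):
    """Break a point cloud into chunks: map each (x, y, z) point to the
    integer index of the voxel that contains it (voxel size is assumed)."""
    return frozenset(
        (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        for x, y, z in points
    )


def centroid(voxels):
    """Mean voxel index of a non-empty set of voxels."""
    if not voxels:
        raise ValueError("cannot take the centroid of an empty frame")
    n = len(voxels)
    return tuple(sum(v[i] for v in voxels) / n for i in range(3))


def predict_next(prev_voxels, curr_voxels):
    """Hypothetical placeholder predictor, NOT a learned model: assume the
    whole scene keeps moving by the same whole-voxel shift it just made."""
    c_prev, c_curr = centroid(prev_voxels), centroid(curr_voxels)
    shift = tuple(round(b - a) for a, b in zip(c_prev, c_curr))
    return frozenset(
        tuple(v[i] + shift[i] for i in range(3)) for v in curr_voxels
    )


def rollout(prev_voxels, curr_voxels, horizon_s=5.0, frame_rate_hz=10.0):
    """Predict one frame at a time, feeding each prediction back in as
    input, until the requested horizon is covered."""
    steps = int(horizon_s * frame_rate_hz)
    frames = []
    for _ in range(steps):
        nxt = predict_next(prev_voxels, curr_voxels)
        frames.append(nxt)
        prev_voxels, curr_voxels = curr_voxels, nxt
    return frames


# Two toy LiDAR frames: the same two points, moved 0.5 m along x.
frame_a = voxelize([(0.1, 0.1, 0.1), (1.1, 0.1, 0.1)])
frame_b = voxelize([(0.6, 0.1, 0.1), (1.6, 0.1, 0.1)])
future = rollout(frame_a, frame_b, horizon_s=5.0, frame_rate_hz=10.0)
```

In a real system the placeholder would be replaced by a trained model, and errors would compound as predictions are fed back in, which is one reason the forecast horizon is limited to a few seconds.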

Waabi is one of a handful of self-driving companies, including competitors Wayve and Ghost, that describe their approach as “AI first.” For Urtasun, this means designing systems that learn from data, rather than systems that must be taught to react to specific situations. The cohort is confident that their method can shorten the time it takes to road-test autonomous vehicles, a topic that drew attention after an October 2023 accident in San Francisco in which a Cruise robotaxi dragged a pedestrian.

Waabi differs from its competitors in building generative models for LiDAR rather than cameras.

“If you want to be a level 4 player, LiDAR is a must,” Urtasun said, referring to the level of automation where cars do not require human attention to drive safely. Cameras are good at showing what the car is seeing, she says, but they're not good at measuring distances or understanding the geometry around the car.

Waabi's model can generate videos showing what the car sees through its LiDAR sensors, but those videos are not used as training data in the company's driving simulator, which it uses to build and test its driving models. This is to ensure that any hallucinations arising in Copilot4D are not taught in the simulator.

Bernard Adam Lange, a Stanford doctoral student who built and studied a similar model, says that while the underlying technology is not new, this is the first time he has seen a generative LiDAR model expand beyond the confines of the lab and into commercial use. Models like these generally help the “brains” of self-driving cars make faster and more accurate inferences, he says.

“What’s transformative is the scale,” he says. “We hope that these models can be leveraged for downstream tasks, such as detecting objects and predicting where people or objects will move next.”