Since launching our generative AI platform just a few months ago, we’ve seen, heard, and experienced an intense, accelerating pace of AI innovation. As a long-time machine learning advocate and industry leader, I've witnessed many breakthroughs, and the continued interest in ChatGPT, which launched nearly a year ago, is a perfect example.

Just as ecosystems thrive on biological diversity, AI ecosystems also benefit from multiple providers. Interoperability and system flexibility have always been key to mitigating risk so organizations can adapt and continue to deliver value. However, the unprecedented pace of evolution in generative AI has made the freedom to choose among providers a critical capability.

The market is changing so quickly that no one can make definitive predictions, either for now or for the near future. That statement has resonated with our customers, and it is one of the core philosophies behind the new generative AI capabilities announced in our recent fall launch.

Relying too heavily on one AI provider can slow down innovation and pose risks. There are already more than 180 different open source LLMs. The market is changing faster than teams can adapt.

DataRobot's philosophy is that organizations should build flexibility into their generative AI strategies based on performance, robustness, cost, and appropriateness for the specific LLM tasks being deployed.

As with any set of tools, LLMs have pros and cons, and each is better suited to certain jobs. Some LLMs may excel at particular natural language tasks, such as text summarization; others may provide more diverse text generation or be cheaper to operate. As a result, many LLMs can be best-in-class in different but useful ways. A technology stack that provides the flexibility to choose among these models, or to mix them, allows organizations to maximize the value of AI in a cost-effective manner.

DataRobot operates as an open, integrated intelligence layer that allows organizations to compare and select the generative AI components that are right for them. This interoperability enhances generative AI output, improves operational continuity, and reduces single-provider dependency.

With this strategy, operational processes are not affected even if a provider experiences an internal disruption. It also allows organizations to manage costs more efficiently by making cost-performance trade-offs across LLMs.
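To make the cost-performance trade-off concrete, here is a minimal Python sketch. The model names, quality scores, and prices are invented for illustration and do not describe any real model or any DataRobot API. The idea is simply to pick the cheapest candidate that clears a quality bar for a given task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str                  # hypothetical model label
    quality: float             # assumed task-specific evaluation score in [0, 1]
    cost_per_1k_tokens: float  # assumed price in USD


def cheapest_acceptable(candidates, min_quality):
    """Return the lowest-cost candidate whose quality meets the bar, or None."""
    acceptable = [c for c in candidates if c.quality >= min_quality]
    if not acceptable:
        return None
    return min(acceptable, key=lambda c: c.cost_per_1k_tokens)


# Illustrative numbers only; real scores would come from your own evaluation.
CANDIDATES = [
    Candidate("model-a", quality=0.91, cost_per_1k_tokens=0.030),
    Candidate("model-b", quality=0.86, cost_per_1k_tokens=0.004),
    Candidate("model-c", quality=0.78, cost_per_1k_tokens=0.001),
]

if __name__ == "__main__":
    choice = cheapest_acceptable(CANDIDATES, min_quality=0.85)
    print(choice.name if choice else "no candidate meets the bar")
```

Raising or lowering `min_quality` per use case is one simple way to express that a summarization job and a customer-facing assistant may justify different models.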

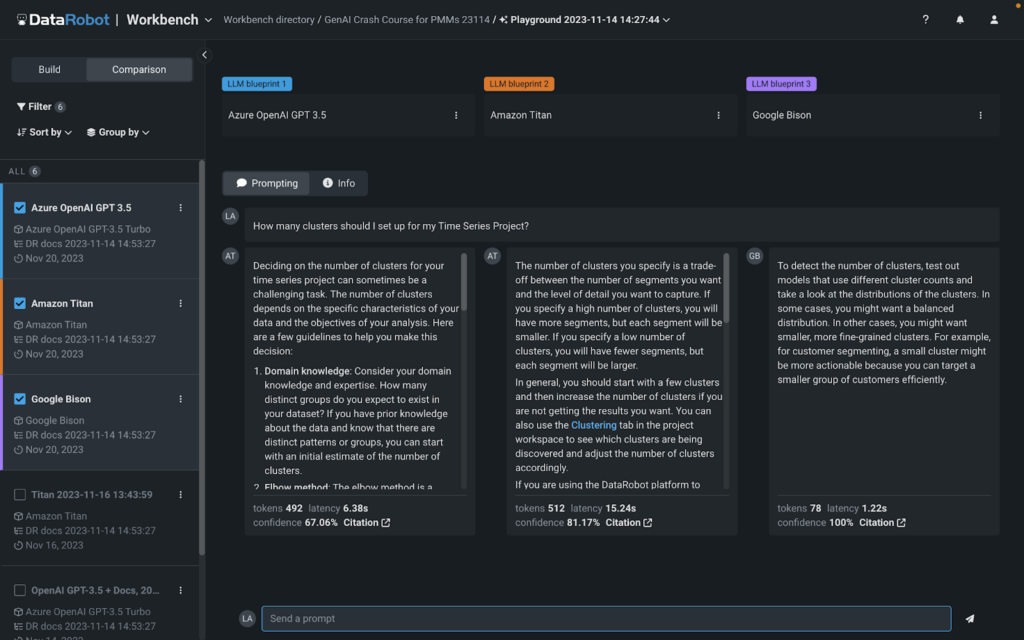

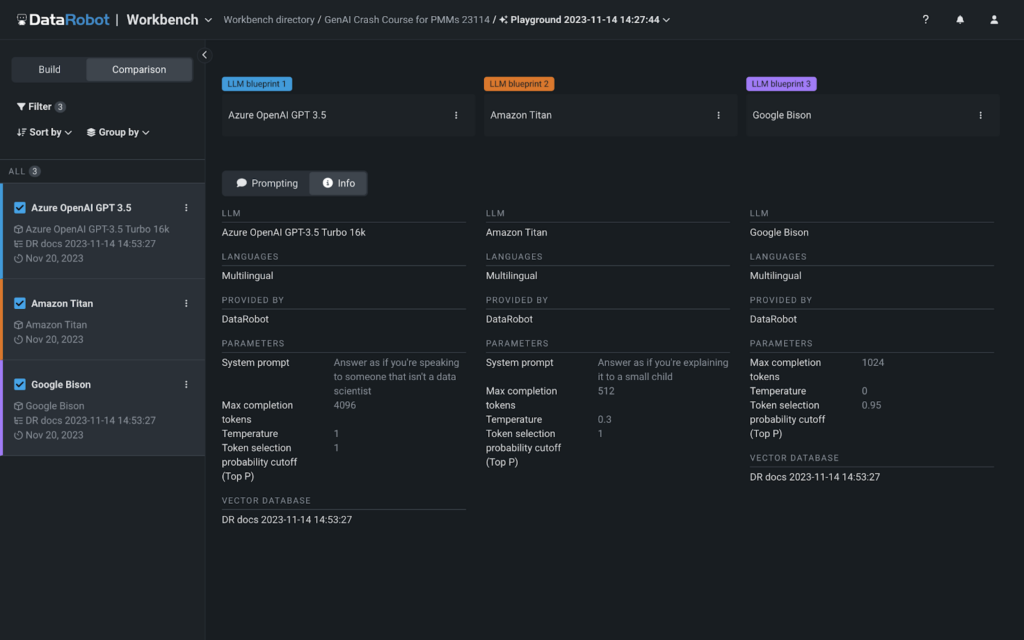

During our fall launch, we announced our new multi-provider LLM Playground. The first-of-its-kind visual interface provides native access to Google Cloud Vertex AI, Azure OpenAI, and Amazon Bedrock models, making it easy to compare and experiment with different generative AI 'recipes'. You can use the LLMs built into our playground or bring your own. Access to these LLMs is available immediately during experiments, so there are no additional steps required to start building GenAI solutions in DataRobot.

The new LLM Playground makes it easy to try, test, and compare different GenAI “recipes” in terms of style/tone, cost, and relevance. We've made it easy to evaluate any combination of base models, vector databases, chunking strategies, and prompting strategies. You can do this whether you prefer to build in the platform UI or in a notebook. The LLM Playground also makes it easy to move from code to visualizing your experiments side by side.
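As a rough illustration of what "any combination" means in practice, the sketch below enumerates candidate recipes as the Cartesian product of the four dimensions. It is plain Python and not the DataRobot API; the `Recipe` type, the `enumerate_recipes` helper, and all option names are hypothetical placeholders.

```python
from itertools import product
from typing import NamedTuple


class Recipe(NamedTuple):
    base_model: str
    vector_db: str
    chunking: str
    prompting: str


def enumerate_recipes(base_models, vector_dbs, chunkings, promptings):
    """Build every combination of the four recipe dimensions."""
    return [
        Recipe(*combo)
        for combo in product(base_models, vector_dbs, chunkings, promptings)
    ]


# Placeholder option names; substitute the components you actually want to compare.
RECIPES = enumerate_recipes(
    base_models=["model-a", "model-b"],
    vector_dbs=["vector-db-1"],
    chunkings=["fixed-512", "by-paragraph"],
    promptings=["zero-shot", "few-shot"],
)

if __name__ == "__main__":
    for recipe in RECIPES:
        print(recipe)
```

The grid grows multiplicatively with each added option, which is why a side-by-side comparison view helps narrow it down quickly.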

DataRobot also allows you to hot-swap base components (e.g., the LLM) without interrupting production as your organization's needs change or the market evolves. This allows you to tailor your generative AI solution to your exact requirements while maintaining technical autonomy and using best-of-breed components.
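One common way to keep components swappable in your own code is to have the application depend on a small interface rather than on a specific provider. The sketch below shows that general pattern in Python; it is not how DataRobot implements hot-swapping, and `TextGenerator`, `Pipeline`, and the stub providers are hypothetical names that stand in for real provider clients.

```python
from typing import Protocol


class TextGenerator(Protocol):
    """Minimal interface the pipeline relies on (hypothetical)."""

    def generate(self, prompt: str) -> str: ...


class StubProviderA:
    # Stand-in for a real provider client; it only tags and echoes the prompt.
    def generate(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class StubProviderB:
    def generate(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


class Pipeline:
    """Builds the prompt and delegates generation to whichever LLM is plugged in."""

    def __init__(self, llm: TextGenerator) -> None:
        self._llm = llm

    def swap_llm(self, llm: TextGenerator) -> None:
        # Callers keep using the same Pipeline object; only the backend changes.
        self._llm = llm

    def answer(self, question: str) -> str:
        prompt = f"Answer concisely: {question}"
        return self._llm.generate(prompt)


if __name__ == "__main__":
    pipeline = Pipeline(StubProviderA())
    print(pipeline.answer("What is a vector database?"))
    pipeline.swap_llm(StubProviderB())
    print(pipeline.answer("What is a vector database?"))
```

Because the prompt logic lives in `Pipeline` and not in the provider classes, replacing the model does not require touching the rest of the application.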

Below you can see exactly how easy it is to compare different generative AI ‘recipes’ using the LLM Playground.

Once you have chosen the 'recipe' that suits you, you can quickly and easily move it, along with its vector database and prompting strategy, into production. Once in production, you get complete end-to-end generative AI lineage, monitoring, and reporting capabilities.

DataRobot's generative AI products enable organizations to easily select the right tool for the job and securely extend internal data to LLMs while measuring outputs for toxicity, veracity, and cost, among other KPIs. We like to say, “We are not building an LLM, we are solving the problem of trust in generative AI.”

The generative AI ecosystem is complex and changing every day. At DataRobot, we ensure a flexible and resilient approach. Think of this as an insurance policy, preventing stagnation in an ever-evolving technology landscape, ensuring data scientists' agility and CIOs' peace of mind. Because the reality is that an organization’s strategy should not be limited to a single supplier’s worldview, pace of innovation, or internal turmoil. It’s about building the resilience and speed to evolve your organization’s generative AI strategy so it can adapt quickly as the market evolves.

You can learn more about other ways to solve the ‘trust problem’ by watching our on-demand fall launch event.

About the author

Ted Kwartler is DataRobot's field CTO. Ted sets the product strategy for explainable and ethical use of data technology. Ted brings unique insight and experience leveraging data, business acumen, and ethics to his current and previous roles at Liberty Mutual Insurance and Amazon. In addition to four DataCamp courses, he teaches graduate courses at Harvard Extension School and is the author of “Text Mining in Practice with R.” Ted is an advisor to the U.S. Government's Bureau of Economic Analysis, serving on a congressionally mandated committee, the “Advisory Committee on Data for Evidence Building,” that advocates for data-driven policy.
