Imagine riding through a tunnel in a self-driving car, unaware that a crash has stopped traffic up ahead. Normally, you would have to rely on the car in front of you to know when to start braking. But what if your car could see around the vehicle ahead and apply the brakes even sooner?

Researchers at MIT and Meta have developed computer vision technology that could one day allow self-driving cars to do just that.

They introduced a method that uses images from a single camera position to create a physically accurate 3D model of the entire scene, including areas blocked from view. Their technology uses shadows to determine what is in obscured parts of the scene.

They call their approach PlatoNeRF, after Plato's allegory of the cave, a passage in the Greek philosopher's “Republic” in which prisoners trapped in a cave discern the reality of the outside world from the shadows cast on the cave wall.

PlatoNeRF combines LiDAR (light detection and ranging) technology with machine learning to create more accurate 3D shape reconstructions than existing AI techniques. PlatoNeRF is also better at smoothly reconstructing scenes where shadows are difficult to see, such as scenes with high ambient lighting or dark backgrounds.

In addition to improving the safety of self-driving cars, PlatoNeRF could make AR/VR headsets more efficient by letting a user model the geometry of a room without walking around to take measurements. It could also help warehouse robots find items faster in cluttered environments.

“Our core idea was to combine two things previously done in different fields: multi-bounce LiDAR and machine learning. It turns out that by combining the two, we can find many new opportunities to explore and get the best of both worlds,” says Tzofi Klinghoffer, an MIT graduate student in media arts and sciences, affiliate of the MIT Media Lab, and lead author of the PlatoNeRF paper.

Klinghoffer wrote the paper with his advisor, Ramesh Raskar, associate professor of media arts and sciences and leader of MIT's Camera Culture Group; senior author Rakesh Ranjan, a director of AI research at Meta Reality Labs; as well as Siddharth Somasundaram of MIT and Xiaoyu Xiang, Yuchen Fan, and Christian Richardt of Meta. The research will be presented at the Conference on Computer Vision and Pattern Recognition.

Shedding light on the problem

Reconstructing an entire 3D scene from a single camera viewpoint is a complex problem.

Some machine learning approaches use generative AI models to guess what lies in occluded regions, but these models can hallucinate objects that aren't actually there. Other approaches try to infer the shape of hidden objects from shadows in a color image, but these methods can struggle when the shadows are hard to see.

For PlatoNeRF, the MIT researchers built on these approaches using a new sensing modality called single-photon LiDAR. LiDAR maps a 3D scene by emitting pulses of light and measuring how long that light takes to bounce back to the sensor. Because single-photon LiDAR can detect individual photons, it provides higher-resolution data.
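
The time-of-flight idea behind LiDAR can be illustrated with a few lines of Python. This is a minimal sketch rather than anything from the researchers' code: it assumes the emitter and sensor sit at the same location, and the function name `direct_return_distance` is made up for this example.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact value, fixed by the SI definition of the meter


def direct_return_distance(round_trip_seconds: float) -> float:
    """Distance to a surface, given the round-trip time of a direct return.

    Assumes (for illustration) that the emitter and sensor are co-located.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels out and back, so the one-way distance is half the path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


if __name__ == "__main__":
    # A pulse that returns after 20 nanoseconds hit something roughly 3 meters away.
    print(round(direct_return_distance(20e-9), 3))
```

The nanosecond scale of these timings is one reason a sensor's timing precision matters so much for depth accuracy.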

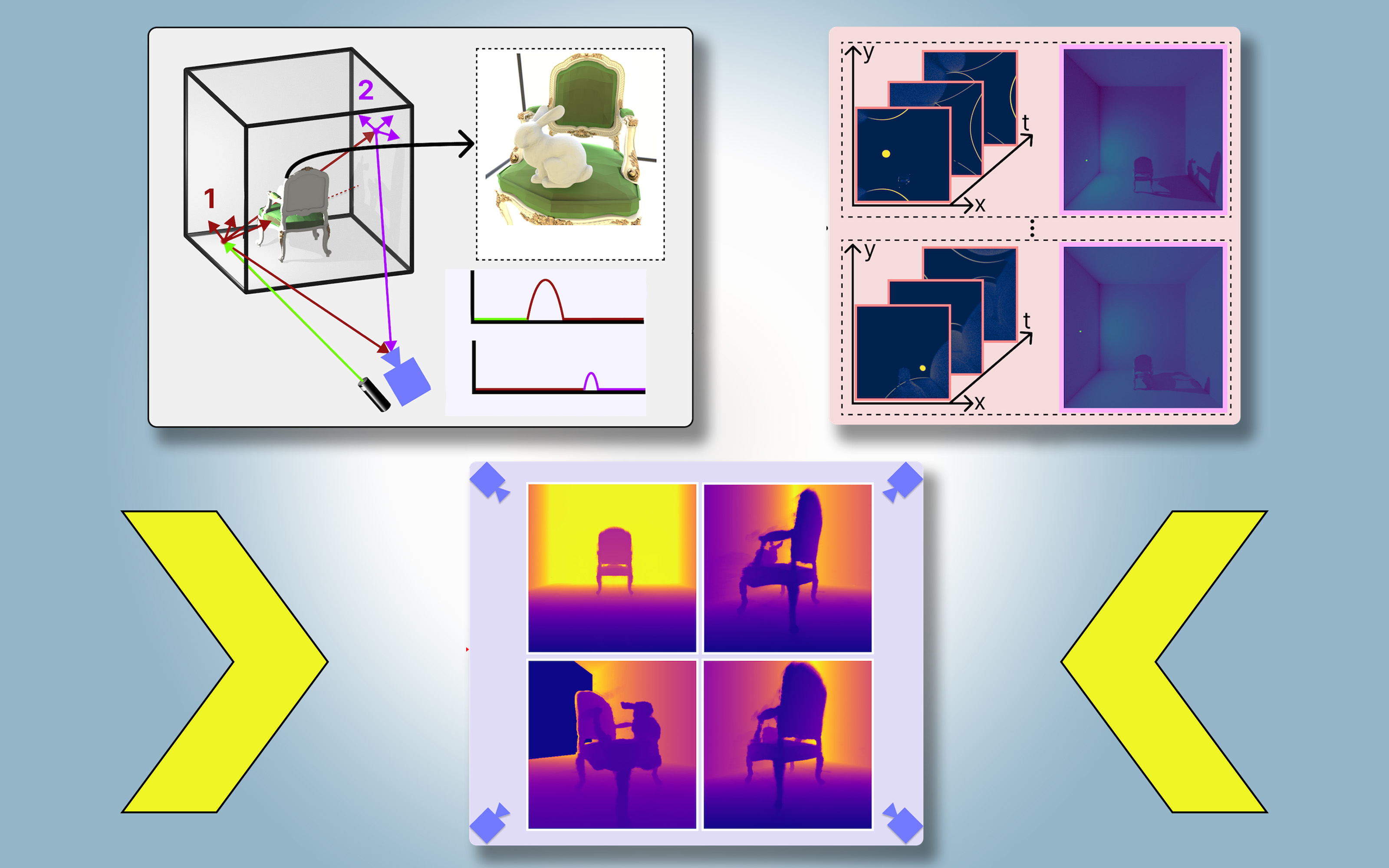

The researchers use a single-photon LiDAR to illuminate a target point in the scene. Some light bounces off that point and returns directly to the sensor. However, most of the light scatters and bounces off other objects before returning to the sensor. PlatoNeRF relies on these second bounces of light.

PlatoNeRF calculates how long it takes for light to bounce twice and then return to the LiDAR sensor to capture additional information about the scene, including depth. The second light reflection also contains information about the shadow.
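
To see why a two-bounce travel time carries depth information, consider the purely geometric sketch below. It is an illustration under stated assumptions, not the paper's method: PlatoNeRF learns the scene with a neural network rather than solving for depth in closed form, and the function names and the idea of a known, unit-length "pixel ray" direction are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def two_bounce_time(laser, lit_point, scene_point, sensor):
    """Travel time for the path laser -> lit_point -> scene_point -> sensor."""
    path = (math.dist(laser, lit_point)
            + math.dist(lit_point, scene_point)
            + math.dist(scene_point, sensor))
    return path / C


def depth_from_two_bounce(time_s, laser, lit_point, sensor, pixel_dir):
    """Depth along a unit-length pixel ray that is consistent with a two-bounce time."""
    # Path length left over after the known first leg (laser -> lit_point).
    remaining = C * time_s - math.dist(laser, lit_point)
    a = [p - s for p, s in zip(lit_point, sensor)]
    a_dot_u = sum(ai * ui for ai, ui in zip(a, pixel_dir))
    a_sq = sum(ai * ai for ai in a)
    denom = 2.0 * (remaining - a_dot_u)
    if denom <= 0:
        raise ValueError("travel time is too short for this geometry")
    # Solve |lit_point - q| + |q - sensor| = remaining for q = sensor + d * pixel_dir.
    return (remaining * remaining - a_sq) / denom


if __name__ == "__main__":
    origin = (0.0, 0.0, 0.0)      # laser and sensor share a position in this toy setup
    lit = (0.0, 0.0, 2.0)         # point the laser illuminates
    hidden = (1.0, 0.0, 2.0)      # point reached by the second bounce
    t = two_bounce_time(origin, lit, hidden, origin)
    norm = math.dist(origin, hidden)
    direction = tuple(x / norm for x in hidden)
    print(depth_from_two_bounce(t, origin, lit, origin, direction), norm)
```

The second function works because all points with the same remaining path length lie on an ellipsoid whose foci are the illuminated point and the sensor; intersecting that ellipsoid with the pixel ray pins down a single depth.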

The system determines which points are in shadow (because there is no light) by tracing secondary rays, which are rays that reflect from the target point to other points in the scene. PlatoNeRF can infer the geometry of hidden objects based on the positions of these shadows.
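
The shadow test at the heart of this step can be sketched with simple geometry. The example below is an assumption-laden toy: it uses a single sphere of known position as the occluder, whereas the real system has to infer the hidden geometry from the shadows it observes. The function name `in_shadow` is invented for this illustration.

```python
import math


def in_shadow(lit_point, scene_point, sphere_center, sphere_radius):
    """Return True if a sphere blocks the secondary ray from lit_point to scene_point."""
    seg = [q - p for p, q in zip(lit_point, scene_point)]
    to_center = [c - p for p, c in zip(lit_point, sphere_center)]
    seg_len_sq = sum(s * s for s in seg)
    if seg_len_sq == 0.0:
        return False  # degenerate ray: the two points coincide
    # Parameter of the point on the segment closest to the sphere center,
    # clamped to [0, 1] so it stays between the two endpoints.
    t = sum(s * w for s, w in zip(seg, to_center)) / seg_len_sq
    t = max(0.0, min(1.0, t))
    closest = [p + t * s for p, s in zip(lit_point, seg)]
    return math.dist(closest, sphere_center) < sphere_radius


if __name__ == "__main__":
    lit = (0.0, 0.0, 0.0)
    wall_point = (4.0, 0.0, 0.0)
    print(in_shadow(lit, wall_point, (2.0, 0.0, 0.0), 0.5))  # occluder sits on the ray
    print(in_shadow(lit, wall_point, (2.0, 2.0, 0.0), 0.5))  # occluder is off to the side
```

Running the test in reverse, from observed shadows back to the shapes that could have cast them, is the harder inference problem the article describes.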

The LiDAR sequentially illuminates 16 points, capturing multiple images that are used to reconstruct the entire 3D scene.

“Each time you light up a point in the scene, a new shadow is created. Because you have these different lighting sources, you have a lot of light rays shooting around, so you're carving out the region that is occluded and lies beyond what the eye can see,” says Klinghoffer.
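
The "carving" intuition can be illustrated on a small 2D grid. This is a loose sketch, not the researchers' algorithm: the grid, the observation format, and the function name `carve_free_cells` are all assumptions made for the example. Every secondary ray that arrives unshadowed proves the cells it crosses are empty, so each extra illumination point shrinks the region where a hidden object could be.

```python
import math


def carve_free_cells(lit_points, observations, grid_size, samples=200):
    """Return the set of grid cells proven empty by unshadowed secondary rays.

    observations[i] is a list of (scene_point, is_lit) pairs for lit_points[i].
    """
    free = set()
    for source, seen in zip(lit_points, observations):
        for target, is_lit in seen:
            if not is_lit:
                continue  # a shadowed ray says something blocks it, but not where
            # Walk along the ray and mark every cell it passes through as empty.
            for k in range(samples + 1):
                t = k / samples
                x = source[0] + t * (target[0] - source[0])
                y = source[1] + t * (target[1] - source[1])
                cell = (math.floor(x), math.floor(y))
                if 0 <= cell[0] < grid_size and 0 <= cell[1] < grid_size:
                    free.add(cell)
    return free


if __name__ == "__main__":
    sources = [(0.5, 0.5), (0.5, 3.5)]
    seen = [
        [((3.5, 0.5), True)],                       # first light: one unshadowed ray
        [((3.5, 3.5), True), ((3.5, 0.5), False)],  # second light: one lit, one shadowed
    ]
    print(len(carve_free_cells(sources[:1], seen[:1], grid_size=4)))
    print(len(carve_free_cells(sources, seen, grid_size=4)))
```

With one illumination point the sketch clears a single row of cells; adding the second clears another row, while the shadowed ray leaves its cells unresolved.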

A successful combination

At the core of PlatoNeRF is the combination of multi-bounce LiDAR with a special type of machine learning model known as a neural radiance field (NeRF). A NeRF encodes the geometry of a scene into the weights of a neural network, which gives the model a strong ability to interpolate, or estimate, new views of the scene.
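
One way to picture what a NeRF stores is as a function that returns a density for any 3D point. The sketch below uses the standard NeRF-style numerical integration of density along a ray to compute how much light survives the trip. It is an illustration only: `toy_density` is a hand-written stand-in for a trained network, and whether PlatoNeRF uses exactly this formulation is not stated here.

```python
import math


def transmittance(density_fn, start, end, num_samples=64):
    """Fraction of light that survives the trip from start to end through a density field."""
    step = math.dist(start, end) / num_samples
    optical_depth = 0.0
    for i in range(num_samples):
        t = (i + 0.5) / num_samples  # sample the midpoint of each segment
        point = tuple(s + t * (e - s) for s, e in zip(start, end))
        optical_depth += density_fn(point) * step
    return math.exp(-optical_depth)


def toy_density(point):
    """Hypothetical stand-in for a trained network: a dense ball of radius 0.5 at the origin."""
    return 50.0 if math.dist(point, (0.0, 0.0, 0.0)) < 0.5 else 0.0


if __name__ == "__main__":
    blocked = transmittance(toy_density, (-2.0, 0.0, 0.0), (2.0, 0.0, 0.0))
    clear = transmittance(toy_density, (-2.0, 2.0, 0.0), (2.0, 2.0, 0.0))
    print(blocked, clear)
```

A transmittance near zero along a ray means the endpoint is effectively in shadow, while a value of one means nothing lies in between, which hints at how a learned density field and shadow measurements can constrain each other.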

Klinghoffer says this interpolation capability, when combined with multi-bounce LiDAR, allows for very accurate scene reconstruction.

“The biggest challenge was figuring out how to combine the two. With multi-bounce LiDAR, we had to really think about the physics of how light travels and how to model that through machine learning,” he says.

They compared PlatoNeRF with two common alternative methods. One method uses only LiDAR and the other method uses only NeRF with color images.

They found that their method outperformed both techniques, especially when the resolution of the LiDAR sensor was low. This would make their approach more practical to deploy in the real world, where low-resolution sensors are common in commercial devices.

“About 15 years ago, our group invented the first camera that could ‘see’ around corners, working by exploiting multiple light reflections, or ‘light echoes.’ These techniques used special lasers and sensors and three light reflections. Since then, LiDAR technology has become more mainstream, leading to research into cameras that can see through fog. This new work uses only two light reflections. This means that the signal-to-noise ratio is very high and the quality of the 3D reconstruction is impressive,” says Raskar.

In the future, the researchers want to try tracking more than two light reflections to see how that could improve scene reconstruction. They are also interested in applying additional deep learning techniques and combining PlatoNeRF with color image measurements to capture texture information.

“Camera images of shadows have long been studied as a means of 3D reconstruction, but this work revisits the issue in the context of LiDAR, demonstrating significant improvements in the accuracy of reconstructed hidden geometry. This research shows how clever algorithms can enable amazing capabilities when combined with common sensors, including the LiDAR systems that many of us now carry in our pockets,” says David Lindell, assistant professor in the Department of Computer Science at the University of Toronto, who was not involved in this work.