Large language models (LLMs) are becoming increasingly useful for programming and robotics tasks, but for more complex reasoning problems, the gap between these systems and humans remains wide. Without the ability to learn new concepts as humans do, these systems fail to form good abstractions (essentially, high-level representations of complex concepts that skip over less important details), and so they falter when asked to perform more sophisticated tasks.

Luckily, researchers at the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) have discovered a treasure trove of abstractions within natural language. In three papers to be presented this month at the International Conference on Learning Representations, the group shows how everyday words are a rich source of context for language models, helping them build better overarching representations for code synthesis, AI planning, and robotic navigation and manipulation.

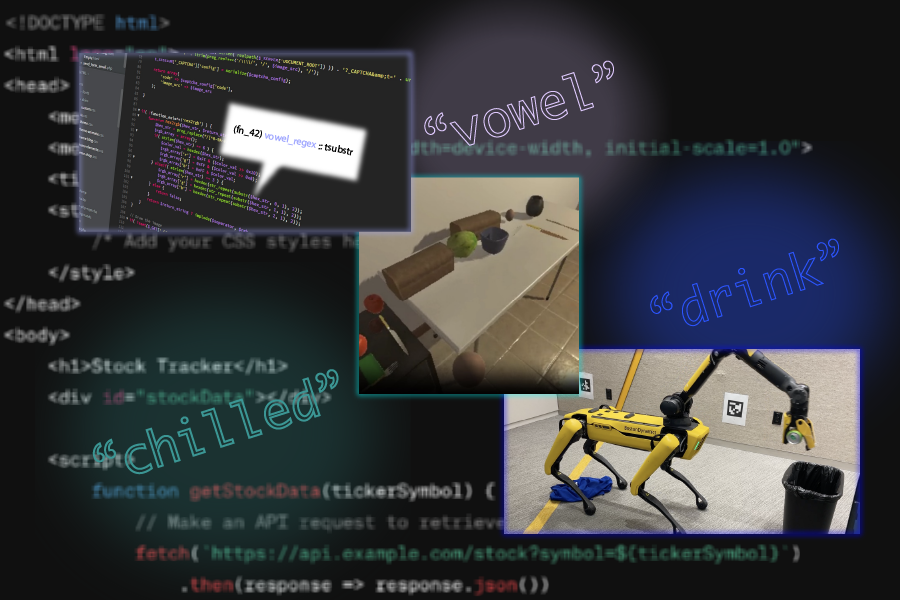

The three separate frameworks build libraries of abstractions for their given tasks: LILO (Library Induction from Language Observations) can synthesize, compress, and document code; Ada (Action Domain Acquisition) explores sequential decision-making for artificial intelligence agents; and LGA (Language-Guided Abstraction) helps robots better understand their environments to develop more feasible plans. Each system is a neurosymbolic method, a type of AI that blends human-like neural networks and program-like logical components.

LILO: A neurosymbolic framework for writing code

Large language models can quickly write solutions to small coding tasks, but they cannot yet design entire software libraries like those written by human software engineers. To take their software development capabilities further, AI models need to refactor (cut down and combine) code into libraries of concise, readable, and reusable programs.

Refactoring tools such as the previously developed, MIT-led Stitch algorithm can automatically identify abstractions, so in a nod to the Disney film “Lilo & Stitch,” CSAIL researchers combined these algorithmic refactoring approaches with LLMs. Their neurosymbolic method, LILO, uses a standard LLM to write code and then pairs it with Stitch to find abstractions, which are comprehensively documented in a library.

LILO's unique emphasis on natural language allows the system to perform tasks that require human-like commonsense knowledge, such as identifying and removing all vowels from a string of code and drawing a snowflake. In both cases, the CSAIL system outperformed standalone LLMs as well as DreamCoder, a previous library learning algorithm from MIT, indicating its ability to build a deeper understanding of the words within prompts. These encouraging results suggest that LILO could assist with tasks such as writing programs to manipulate documents like Excel spreadsheets, helping AI answer questions about visuals, and drawing 2D graphics.

“Language models prefer to work with functions that are named in natural language,” says Gabe Grand SM ’23, a doctoral student in electrical engineering and computer science at MIT, a CSAIL affiliate, and lead author of the study. “Our work creates more straightforward abstractions for language models and assigns natural language names and documentation to each one, making code more interpretable for programmers and improving system performance.”

When prompted with a programming task, LILO first uses an LLM to quickly propose solutions based on the data it was trained on, and then the system slowly searches more exhaustively for other solutions. Next, Stitch efficiently identifies common structures within the code and pulls out useful abstractions. LILO then automatically names and documents these abstractions, resulting in simplified programs that the system can use to solve more complex tasks.
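The compression step described above can be illustrated with a toy example. The sketch below is not Stitch or LILO: it assumes programs are written as nested Python tuples in a Logo-like style, it only finds repeated subexpressions without variables, and the name `side_and_turn` is chosen by hand where LILO would ask a language model. All function names are hypothetical.

```python
from collections import Counter


def size(expr):
    """Count the leaf tokens in a nested-tuple expression."""
    if not isinstance(expr, tuple):
        return 1
    return sum(size(child) for child in expr)


def subexpressions(expr):
    """Yield every compound (tuple) subexpression, including expr itself."""
    if isinstance(expr, tuple):
        yield expr
        for child in expr:
            yield from subexpressions(child)


def best_abstraction(programs):
    """Return (subexpression, tokens_saved) for the most compressive repeat."""
    counts = Counter(sub for prog in programs for sub in subexpressions(prog))
    best, best_saving = None, 0
    for sub, count in counts.items():
        # Each use shrinks to one token; the definition is stored once.
        saving = count * (size(sub) - 1) - size(sub)
        if count > 1 and saving > best_saving:
            best, best_saving = sub, saving
    return best, best_saving


def rewrite(expr, target, name):
    """Replace every occurrence of target inside expr with the symbol name."""
    if expr == target:
        return name
    if isinstance(expr, tuple):
        return tuple(rewrite(child, target, name) for child in expr)
    return expr


# Three toy drawing programs that share one fragment.
side = ("seq", ("forward", 10), ("right", 90))
programs = [
    ("repeat", 4, side),
    ("seq", ("repeat", 4, side), ("forward", 20)),
    ("repeat", 6, side),
]

abstraction, saved = best_abstraction(programs)
library = {"side_and_turn": abstraction}  # name picked by hand (assumption)
compressed = [rewrite(p, abstraction, "side_and_turn") for p in programs]
print(abstraction, saved)
print(compressed)
```

Here the shared fragment wins because extracting it saves the most tokens across the three programs, and the rewritten programs then refer to it by a readable name.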

The MIT framework writes programs in a domain-specific programming language, such as Logo, a language developed at MIT in the 1970s to teach programming to children. Extending the automated refactoring algorithm to handle more general programming languages such as Python will be the focus of future research. Nonetheless, their work represents a step forward in how language models can facilitate increasingly sophisticated coding activities.

Ada: Natural language guides AI task planning

Just as in programming, AI models that automate multi-step tasks in households and command-based video games lack abstractions. Imagine you're cooking breakfast and you ask your roommate to bring a hot egg to the table. Your roommate will intuitively abstract their background knowledge about cooking in your kitchen into a sequence of actions. In contrast, an LLM trained on similar information will still struggle to reason about what it needs to build a flexible plan.

The CSAIL-led “Ada” framework, named after the famous mathematician Ada Lovelace, whom many consider the world's first programmer, makes headway on this problem by developing libraries of useful plans for virtual kitchen chores and games. The method trains on potential tasks and their natural language descriptions, and then a language model proposes action abstractions from this dataset. A human operator scores and filters the best plans into a library, so that the best possible actions can be implemented into hierarchical plans for different tasks.
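The score-and-filter step and the resulting hierarchical plans can be sketched in a few lines. This is only an illustration under stated assumptions, not Ada's actual representation: the `ActionProposal` class, the 0-to-1 scores, the threshold, and every action name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionProposal:
    name: str
    steps: tuple   # ordered sub-steps; may name other library actions
    score: float   # hypothetical 0-1 rating assigned by an operator


def build_library(proposals, min_score=0.5):
    """Keep the best-scoring proposal per name among those above the threshold."""
    library = {}
    for proposal in proposals:
        if proposal.score < min_score:
            continue
        kept = library.get(proposal.name)
        if kept is None or proposal.score > kept.score:
            library[proposal.name] = proposal
    return library


def expand(step, methods):
    """Recursively expand a step into primitive actions.

    `methods` maps a high-level action name to its ordered sub-steps;
    any step that is not a key is treated as primitive.
    """
    if step not in methods:
        return [step]
    primitive = []
    for sub_step in methods[step]:
        primitive.extend(expand(sub_step, methods))
    return primitive


proposals = [
    ActionProposal("heat_egg", ("pick_up_egg", "place_in_pan", "turn_on_stove"), 0.9),
    ActionProposal("serve_hot_egg", ("heat_egg", "carry_to_table"), 0.8),
    ActionProposal("teleport_egg", ("wish_really_hard",), 0.1),
]

library = build_library(proposals)
methods = {name: proposal.steps for name, proposal in library.items()}
plan = expand("serve_hot_egg", methods)
print(sorted(library))
print(plan)
```

The low-scoring proposal never reaches the library, and the high-level request expands into a flat sequence of primitive steps by reusing the action stored beneath it.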

“Traditionally, large language models have struggled with more complex tasks because of problems such as reasoning about abstractions,” says Ada lead researcher Lio Wong, a graduate student in brain and cognitive sciences at MIT, a CSAIL affiliate, and a co-author of LILO. “But by combining the tools that software engineers and roboticists use with LLMs, we can solve difficult problems such as decision-making in virtual environments.”

When the researchers integrated the widely used large language model GPT-4 into Ada, the system completed more tasks in a kitchen simulator and in Mini Minecraft than the AI decision-making baseline “Code as Policies.” Ada used background information hidden in natural language to understand how to place chilled wine in a cabinet and craft a bed. The results showed task accuracy improvements of 59 percent and 89 percent, respectively.

Building on this success, the researchers hope to generalize their work to real homes, so that Ada could help with other household tasks and assist multiple robots in a kitchen. For now, its main limitation is that it uses a generic LLM, so the CSAIL team wants to apply a more powerful, fine-tuned language model that could support more extensive planning. Wong and her colleagues are also considering combining Ada with language-guided abstraction (LGA), a new robot manipulation framework from CSAIL.

Language-guided abstraction: Representations for robotic tasks

Andi Peng SM '23, an MIT graduate student in electrical engineering and computer science and a CSAIL affiliate, and her co-authors designed a method to help machines interpret their surroundings more like humans do, cutting out unnecessary details in complex environments such as factories or kitchens. Like LILO and Ada, LGA brings a new focus to how natural language can lead us to better abstractions.

In these more unstructured environments, a robot needs some common sense about what it is tasked with, even if it has had basic training beforehand. For example, if you ask a robot to hand you a bowl, the machine needs a general understanding of which features are important in its surroundings. From there, it can reason about how to give you the item you want.

In LGA's case, humans first provide a pre-trained language model with a general task description in natural language, such as “Get my hat.” The model then translates this information into abstractions of the essential elements needed to perform the task. Finally, an imitation policy trained on a few demonstrations can implement these abstractions to guide the robot to grab the desired item.
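The three stages can be mimicked with a toy sketch. It is not the LGA implementation: a keyword match stands in for the pre-trained language model, the imitation policy is a plain lookup table, and every feature name, action name, and function here is a hypothetical assumption.

```python
def relevant_features(task, feature_names):
    """Stand-in for the language model: keep features that share a word with the task."""
    task_words = {word.strip(".,!?") for word in task.lower().split()}
    return {name for name in feature_names if task_words & set(name.split("_"))}


def abstract_state(state, keep):
    """Drop every feature that the abstraction marks as irrelevant."""
    return {name: value for name, value in state.items() if name in keep}


def fit_lookup_policy(demonstrations, keep):
    """Imitation by lookup: map each abstracted demo state to its demonstrated action."""
    table = {}
    for state, action in demonstrations:
        key = tuple(sorted(abstract_state(state, keep).items()))
        table[key] = action

    def policy(state):
        key = tuple(sorted(abstract_state(state, keep).items()))
        return table.get(key)  # None when no demonstration matches

    return policy


demonstrations = [
    ({"hat_location": "shelf", "mug_location": "table", "wall_color": "white"}, "reach_shelf"),
    ({"hat_location": "floor", "mug_location": "sink", "wall_color": "white"}, "reach_floor"),
]

keep = relevant_features("Get my hat.", demonstrations[0][0].keys())
policy = fit_lookup_policy(demonstrations, keep)

# Distractor features differ from every demonstration, yet the hat is on the shelf.
new_scene = {"hat_location": "shelf", "mug_location": "sink", "wall_color": "blue"}
action = policy(new_scene)
print(keep, action)
```

Because the policy only sees the abstracted state, changes to irrelevant details such as the mug or the wall color do not affect the action it selects.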

Previous work required a person to take extensive notes on different manipulation tasks to pre-train a robot, which can be expensive. Remarkably, LGA guides language models to produce abstractions similar to those of a human annotator, but in less time. To illustrate this, the researchers used LGA to develop robotic policies that help Boston Dynamics' Spot quadruped pick up fruit and place it in a recycling bin. These experiments demonstrate how the MIT-developed method can scan the world and develop effective plans in unstructured environments, potentially guiding autonomous vehicles on the road and robots working in factories and kitchens.

“In robotics, something we often overlook is how much we need to refine our data to make a robot useful in the real world,” Peng says. “Beyond simply memorizing what's in an image to train a robot to perform a task, we wanted to leverage computer vision and captioning models in conjunction with language. By generating text captions from what the robot sees, we show that language models can essentially build important world knowledge for a robot.”

The challenge for LGA is that some behaviors cannot be described in language, which leaves certain tasks underspecified. To expand how they represent features in an environment, Peng and her colleagues are considering incorporating multimodal visualization interfaces into their work. In the meantime, LGA provides a way for robots to get a better feel for their surroundings as they lend a helping hand to humans.

“An exciting frontier” in AI

“Library learning is one of the most exciting frontiers of artificial intelligence, providing a path to discovering and reasoning about compositional abstractions,” says Robert Hawkins, an assistant professor at the University of Wisconsin-Madison who was not involved in the papers. Hawkins notes that previous techniques exploring this topic have been “too computationally expensive to be used on a large scale” and have a problem with the lambdas they generate (lambdas being the keywords used to describe new functions in many languages). “They tend to produce an opaque ‘lambda salad,’ a big pile of functions that are difficult to interpret. These recent papers demonstrate a powerful way forward by placing large language models in an interactive loop with symbolic search, compression, and planning algorithms. This work enables the rapid acquisition of libraries that are more interpretable and adaptable to the task at hand.”

By building libraries of high-quality code abstractions using natural language, the three neurosymbolic methods make it easier for language models to tackle more sophisticated problems and environments in the future. This deeper understanding of the precise keywords within a prompt offers a way forward in developing more human-like AI models.

MIT CSAIL members are senior authors on each paper: Joshua Tenenbaum, a professor of brain and cognitive sciences, for both LILO and Ada; Julie Shah, head of the Department of Aeronautics and Astronautics, for LGA; and Jacob Andreas, associate professor of electrical engineering and computer science, for all three. The additional MIT authors are all doctoral students: Maddy Bowers and Theo X. Olausson for LILO, Jiayuan Mao and Pratyusha Sharma for Ada, and Belinda Z. Li for LGA. Muxin Liu of Harvey Mudd College was a co-author on LILO; Zachary Siegel of Princeton University, Jiahai Feng of the University of California, Berkeley, and Noa Korneev of Microsoft were co-authors on Ada; and Ilia Sucholutsky, Theodore R. Sumers, and Thomas L. Griffiths of Princeton were co-authors on LGA.

LILO and Ada were supported in part by MIT Quest for Intelligence, the MIT-IBM Watson AI Lab, Intel, the U.S. Air Force Office of Scientific Research, the Defense Advanced Research Projects Agency, and the U.S. Office of Naval Research, with the latter project also receiving funding from the Center for Brains, Minds and Machines. LGA received funding from the National Science Foundation, Open Philanthropy, the Natural Sciences and Engineering Research Council of Canada, and the U.S. Department of Defense.